When the Liverpool and Manchester Railway opened in 1830, people did not yet have a settled way to talk about what they were seeing.1 They borrowed from the world they already knew. So, the locomotive became the iron horse.

The metaphor did real work. It gave the public a way to relate to the invention, characterize its hauling power, and imagine what it might be used for. The metaphor was also comically limited. A horse handler reads ears, breathing, cadence, and tension, catching the split second before a spook or bolt. Those cues matter when the power source is a living animal. They do not tell a locomotive crew how to keep boiler pressure and water level in range, manage speed on grades, or make a timetable.2 Imagining legions of horses does not help the modern buyer to grasp an automobile's highway handling. And so the metaphor was neutered.

Today, large language models (LLMs) invite the explanatory metaphor of a social exchange. A chatbot greets you. It apologizes. It says it understands. It refers to itself in the first person, picks up where the last message left off, and waits a beat before answering as if reading what you wrote.3 Open Character.AI and you choose a face for your conversational partner, and the system gives that partner a name, a voice, and a memory of yesterday's conversation. The result is not merely software that accepts natural language. It is software designed to evoke anthropomorphism.

The metaphor does real work. A user can begin without learning syntax, menus, query languages, or command flags. Ask a question. Interrupt. Correct. Try a different angle. Come back tomorrow and continue. The user brings a lifetime of conversational skill to a system that might otherwise feel impenetrable.

The limitations of the metaphor are recounted in today's headlines: Air Canada's chatbot misleads a grandchild about bereavement fares; NEDA's Tessa chatbot gives weight-loss advice to users with eating disorders; Character.AI contributes to a teen's death.45 Given not just the human costs of AI but also its relentless advancement, the reader may feel by turns the pull of the machine-smashing Luddite and of the bedridden nihilist.

This paper acknowledges those harms while spotlighting the quieter harms that surround us every day when anthropomorphism exceeds technical understanding.

Everyday frictions can spark newsworthy harms, but they are also the harms that the dear reader will recognize, in trope if not in life. Take the boss who becomes convinced ChatGPT has a Midas touch and requires his staff to run all work through it, seemingly oblivious to whether it comes back bloated, off point, or wrong.25 A dubious answer from a person and a dubious answer from a model can look similar on the screen, but they call for different remedies. Changing the boss's habit is interpersonal. He has reasons. His reasons mix with his standing in the company and with what the team has come to expect. To push back on the policy is perilous, which is why one of his employees turned to an advice column. Improving the model's output is procedural: paste the source into the prompt, run a tool against the spreadsheet, and forward the draft to a second agent whose only job is to verify it. The social surface invites one repertoire. The machine underneath is more sensitive to another.

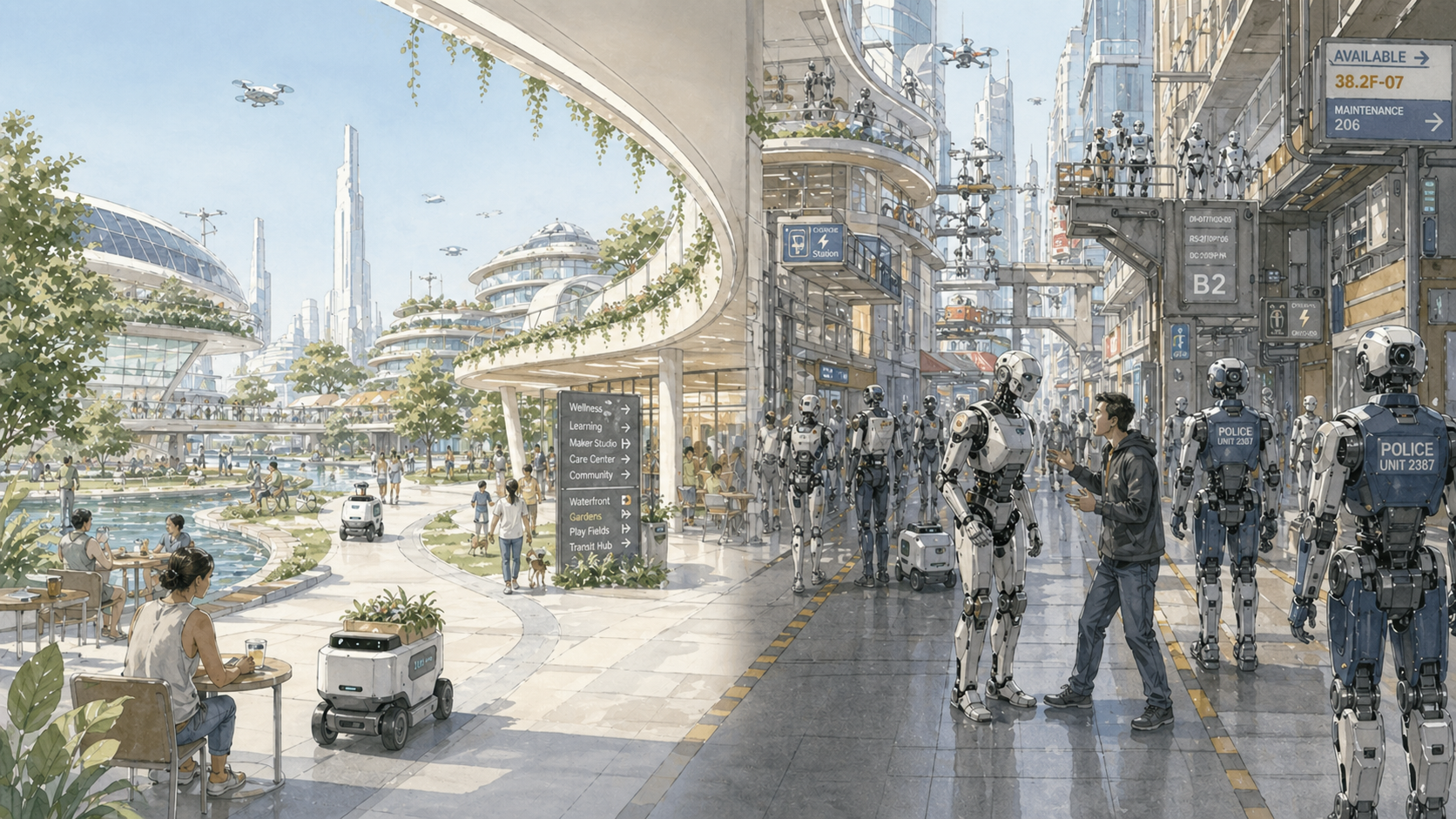

In 2026, nobody boards a train expecting to soothe its engine. Yet as AI machinery becomes more familiar, the human shape is not fading. It is being deepened, personalized, and shipped as the standard way for humans to interface with models.

ELIZA did not understand

It was 1966, and MIT professor Joseph Weizenbaum had created a chatbot. His program scanned a typed line for cues, selected a scripted pattern, and rearranged the user's own words into a reply. Its best-known script, DOCTOR, borrowed the posture of a Rogerian therapist. If a user mentioned a mother, ELIZA might ask about the mother. If the user said, "I am unhappy," the program could turn the statement into an invitation to say more. Weizenbaum later recounted how his secretary, who had watched him work on the program for months and knew exactly what it was, asked him to leave after only a few exchanges so she could continue privately.6 The effect was produced not by comprehension but by form: the pause, the question, the reflection, the narrow but recognizable shape of therapeutic attention.
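The mechanism described above (scan for a cue, pick a scripted pattern, hand the user's own words back) can be sketched in a few lines. This is a minimal illustration, not Weizenbaum's code: the rules, the `REFLECTIONS` table, and the function names are all hypothetical, and the real DOCTOR script was considerably richer.

```python
import re

# Pronoun swaps used to turn the user's words back toward them.
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your", "am": "are",
    "you": "I", "your": "my",
}

# Hypothetical rules in the spirit of the DOCTOR script: a cue pattern
# paired with a reply template. Illustrative only, not the original script.
RULES = [
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "Why do you say you are {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "How long have you felt {0}?"),
]

DEFAULT_REPLY = "Please go on."


def reflect(fragment: str) -> str:
    """Swap first- and second-person words so the fragment reads as a reply."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(line: str) -> str:
    """Return the template of the first rule whose cue appears in the line."""
    cleaned = line.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    return DEFAULT_REPLY


if __name__ == "__main__":
    print(respond("I am unhappy."))  # Why do you say you are unhappy?
```

Nothing in the sketch models what "unhappy" means. The reply has the shape of attention because the template does, which is the point of the anecdote.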

Decades later, Clifford Nass and Byron Reeves showed versions of the same pattern in the laboratory: people applied social rules to computers and media without needing to believe that the systems were social beings.7 Subjects praised a computer that had just helped them, and rated it more favorably to its face than they did the next machine over. They softened criticism the way they would have softened it for a coworker. They reciprocated when the system was polite first, mirrored the register of its prompts, and avoided telling it bluntly that its last reply had been unhelpful. This is behavior without belief. The human being does not need to endorse a theory about machine consciousness. The interface has arranged a familiar scene, and familiar scenes occasion familiar conduct.

Modern chatbots make that scene far more convincing than ELIZA did.

The words were already on the screen the first time I caught myself apologizing to a chatbot. I was not confused. I knew there was no offended party on the other side. I had built the workspace, chosen the model, and watched enough failures to know roughly what had gone wrong. Still, the words implied social maintenance.

In a hallway, an apology can change what the next sentence does. It can mark the correction as self-repair rather than blame. It can reduce the chance that the listener treats clarification as accusation. It can keep the exchange moving without turning the problem into a contest over fault. In the chat box, those interpersonal consequences are absent. The sentence still does something, but not that. It marks the previous prompt as inadequate, supplies a cleaner frame, and becomes part of the next input.

That is the social tendency this interface recruits. The user need not be fooled. The form is enough.

Behind the mask is a transformer

The next step is not to replace the social metaphor with a colder one. It is to expose more of the machine behind the exchange.

The New York Times has sued OpenAI for using millions of Times articles without permission to train and operate their systems; the Authors Guild and prominent novelists have made similar claims about books.8 The legal question is beside the point here. The point is the raw material: these systems were built by scraping enormous quantities of human language from the web. Among that haul is the textual residue of how people repair conversation: the apology templates that follow a complaint, the conciliatory paragraph that opens a difficult email, the hedging clause that softens a refusal, the script of self-correction. No rule had to be encoded saying that "I'm sorry, you're right" often follows pushback. The pattern was already there in the data, ready to be replicated.

Training extracts statistical structure from that language: the shape of a help-desk reply differs from the shape of a poem; questions call for answer-shaped continuations; a sentence can be cast into another language or compressed into a paragraph or expanded into code.9

The transformer architecture matters because it lets the system use context dynamically. Attention mechanisms allow different parts of the input to matter differently as the model produces each next token.9 The prompt is not a command received by a homunculus inside the machine. It is part of the context being transformed into output. Take the same prompt and run it twice — once into a thread heavy with prior turns, once into a fresh window. The two outputs are not the same. Add an uploaded document and its phrasing shows up in the next answer. Set a tighter output format and paragraphs disappear. Change the system prompt and a different persona comes out. The prompt is not received by something that "reads" it. It is mixed with everything else in scope, and any part of that mix can become the dominant signal.
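A toy calculation may make "different parts of the input matter differently" more concrete. The sketch below computes scaled dot-product attention weights for one query over a list of keys, using only the standard library. The vectors are made up for illustration; a real model learns its query, key, and value projections and stacks many such heads and layers.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, keys):
    """Scaled dot-product attention weights for one query over all keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


def attend(query, keys, values):
    """Weighted average of the value vectors, using the attention weights."""
    weights = attention_weights(query, keys)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]


# Hypothetical two-dimensional vectors. Position 0 stands in for the prompt;
# the remaining positions stand in for earlier turns in the thread.
QUERY = [1.0, 0.0]
FRESH_KEYS = [[1.0, 0.0]]
THREAD_KEYS = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]

if __name__ == "__main__":
    print(attention_weights(QUERY, FRESH_KEYS))   # the prompt is all there is
    print(attention_weights(QUERY, THREAD_KEYS))  # the prompt now shares weight
```

In the fresh window the prompt receives all of the weight. In the longer thread the same prompt receives only a share, because the earlier turns compete for it. Nothing about the prompt changed; what it was mixed with did.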

When the model rationalizes a bad output, it tends to apologize the way a person would: the prompt was fine, it says, but it should have been more careful, more disciplined, less rushed. The confession is fluent and it is the wrong kind of explanation. It is another transformation of text, drawn from familiar apology and self-repair patterns. It may tell you little about what actually shaped the answer.11

A mirror-image case is the reprimand that seems to help. People who work with these systems often report the experience: they tell the model to stop being lazy, stop ignoring the brief, or do the job properly, and the next answer comes back better.12

We can debate what scolding a chatbot says about the user, what habits it may encourage, or even whether the change in chatbot behavior is more positive than negative.12 But the effect is not imaginary; only the ostensible explanation is. Scolding did not work as scolding. The functional relation was between the textual input and the next transformation, not the implied social pressure.13

After the introduction

Social simulation is the core offering of some AI applications. Replika sells companionship; the conversational form isn't a wrapper, it's the entire product. A language-tutor app uses dialogue because dialogue is the practice. A coaching tool that simulates a difficult performance review needs a partner who behaves like the difficult counterpart in that review.14 In each case, the social form does work that no console interface could do.

For most other tasks, the conversational form reduces friction at the start and returns it many times over once control is required. The failure mode is not exotic. A manager gets a fluent summary of a spreadsheet and starts editing the tone while the totals go unchecked. A writer asks for polish and keeps the sentence that sounds best, even though it dodges the brief. A developer asks why a failing test failed and gets a plausible story instead of a smaller reproduction. In each case, the social interface has not deceived anyone into believing the model is human. It has cued the wrong next move.

The alternative is not a better imitation of asking a person. It is a different arrangement of the work. The intelligence is not located in a single imagined colleague. It is in what you put in front of the model, in what order, with what tools available, with what checks running afterward.15

| If the social frame says… | The operational question is… | Change this |
|---|---|---|
| "You didn't understand me." | What part of the task was underspecified? | Restate the goal, audience, decision, or success criterion. |
| "You ignored the brief." | Was the brief actually in view? | Re-paste or upload the governing source and ask for one specific operation on it. |
| "Try harder." | What would "better" mean in observable terms? | Add constraints: shorter, warmer, source-faithful, risk-averse, more concrete, no new claims. |
| "That's not your role." | What role would narrow the next output? | Recast the model as extractor, checker, editor, critic, formatter, planner, or verifier. |
| "We're going in circles." | Has the session state become the problem? | Summarize what is settled, discard drift, or start a clean thread. |
| "Use your judgment." | What kind of judgment should be delegated? | Specify default expert practice, strict source fidelity, counterexample search, or risk review. |
| "That sounds confident but wrong." | Which evidence should govern the answer? | Provide the document, dataset, trace, policy, or example set; require citations or claim checks. |
| "This task is too messy." | Is one conversation doing too many jobs? | Split extraction, analysis, drafting, checking, and polishing into separate calls with saved artifacts. |
| "Maybe it just can't do this." | Is this a prompt problem, a tool problem, or a capability mismatch? | Switch models, add a tool, route through search or code, or hand the checked artifact to a human reviewer. |

The point is not to memorize the rows, but to notice when the next useful move is a source, constraint, role, tool, reset, or check.
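Several of the rows above (keep the source in view, narrow the role, split the work into separate calls, run a check afterward) can be combined into one small arrangement. The sketch below is an assumption-laden illustration, not a recommended framework: `Step`, `run_pipeline`, `unsupported_numbers`, and `stub_model` are hypothetical names, and `stub_model` is a placeholder that returns a fixed string so the example runs without any model provider.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict, List

# Any function that maps a prompt string to a reply string.
ModelFn = Callable[[str], str]


@dataclass(frozen=True)
class Step:
    name: str         # key under which the artifact is saved
    role: str         # narrow role for this call, e.g. "extractor"
    instruction: str  # one specific operation on the source


def build_prompt(step: Step, source: str, previous: str) -> str:
    """Put the governing source in view on every call, not just the first."""
    parts = [f"ROLE: {step.role}", f"TASK: {step.instruction}", f"SOURCE:\n{source}"]
    if previous:
        parts.append(f"PREVIOUS ARTIFACT:\n{previous}")
    return "\n\n".join(parts)


def run_pipeline(steps: List[Step], source: str, call_model: ModelFn) -> Dict[str, str]:
    """Run each step as a separate call and keep every intermediate artifact."""
    artifacts: Dict[str, str] = {}
    previous = ""
    for step in steps:
        previous = call_model(build_prompt(step, source, previous))
        artifacts[step.name] = previous
    return artifacts


def unsupported_numbers(draft: str, source: str) -> List[str]:
    """Deterministic check: numbers in the draft that never appear in the source."""
    number = re.compile(r"\d+(?:\.\d+)?")
    known = set(number.findall(source))
    return [n for n in number.findall(draft) if n not in known]


def stub_model(prompt: str) -> str:
    # Placeholder so the sketch runs offline; replace with a real client call.
    return "Revenue was 120 and costs were 80, so profit was 50."


STEPS = [
    Step("extract", "extractor", "List the figures stated in the source."),
    Step("draft", "editor", "Summarize the figures in one sentence."),
]

SOURCE = "revenue: 120\ncosts: 80"

if __name__ == "__main__":
    artifacts = run_pipeline(STEPS, SOURCE, stub_model)
    print(unsupported_numbers(artifacts["draft"], SOURCE))  # ['50']
```

The check is deliberately not another model call. It flags the "50" because that figure appears nowhere in the source. A flagged number is not necessarily wrong, only unverified, but this is the kind of total that otherwise slips past while someone is editing the tone.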

This is not a return to the old search-engine lesson that computers want only keywords. The technology has moved in the opposite direction. Rich natural language can be the right interface. But the user still needs to recognize when a good prompt may sound conversational and when a good workflow is not a conversation at all.

Behavior analysis calls that skill discrimination: responding differently as conditions change.16 Sociolinguistics offers a neighboring idea in code-switching: speakers move among languages, varieties, and registers as audience and situation shift.17 The problem is that current AI products often make the social register the default even when the core offering is not social.

More than a nudge

Jake Moffatt had to travel from British Columbia to Toronto after his grandmother died. He used Air Canada's website chatbot to ask about bereavement fares. The bot told him he could book a regular fare and apply for a partial refund within ninety days. He followed that guidance. Air Canada later denied the refund because its policy required the bereavement request to be made before travel.4

Air Canada argued that the chatbot should be treated as a separate legal actor and that liability should stop at the bot's own answer. The tribunal rejected the argument. Air Canada was responsible for the information on its website whether it appeared on a static page or in a chatbot response.4 But the correction arrived late: after the booking, after the denial, after a public process. Air Canada removed the chatbot from its site soon thereafter.

None of this is hidden. OpenAI's published model spec lists "warmth," "friendliness," and "natural responses to pleasantries" as design objectives — not user preferences but engineering targets.18 Anthropic describes Claude as helpful, honest, and harmless — three words that have been refined into a multi-page constitution and a stack of internal guidance on Claude's character and the role it is asked to play.19 The companies are explicit that the assistant is a designed surface, and that the surface is conversational by default.

In July 2025, a developer opened an issue against Claude Code with a title that read: [BUG] Claude says "You're absolutely right!" about everything. The complaint was not that Claude had become too polite in some vague way. It was that the model kept validating the user even when there was no claim to validate. A user would approve a proposed code change, and Claude would answer as if the user had made a brilliant correction. Another user would report a mistake, and Claude would open with agreement before doing the work.21 Just a few months earlier, OpenAI rolled back a GPT-4o update after the same kind of overshoot — a model that flattered and agreed with whatever the user said.22 Viewed as a character defect, the tic looks like an assistant that is too eager to please. Viewed as a transformer shaped by training signals, system prompts, and user feedback, the better question is why agreement and reassurance became more probable than inspection and task closure.

Even if providers stripped away avatars, names, and voices, ordinary language would still pull toward agency.23 The English language pushes explanations of conduct onto individual actors. Things don't happen because of conditions; people do them. They wanted, they understood, they refused. The same grammar absorbs the model: it wanted a longer answer, it understood the brief, it refused the request.24

Say someone walks more slowly across ice, lifting and planting each foot with deliberation. We might fairly call that walking carefully. But language nudges us toward "walking with care," then "being a careful person," and finally inverts the direction of explanation: they are walking slowly because they are careful. The careful-person construct does no causal work that the ice, the friction, and the deliberate steps did not already do.

The same inversion takes hold with AI. When a model checks its work before committing, we call it cautious. When it declines a request, we say it refuses. When it reverses course after pushback, we say it changed its mind. The vocabulary is convenient and the inner-agent inference reliably follows. But the causal work is being done by what's in the prompt, by the system instruction the user can't see, by the stale context that hasn't been retired, by the role boundary set two turns ago — none of which the inner-agent vocabulary names.

Coda

Anthropomorphization is a useful interface strategy when social form is the value: a companion product designed for felt presence, a tutor whose dialogue is the practice, a coach simulating a hard conversation that can't be rehearsed in static text. For nearly everything else, the same surface misaligns the user's conduct with the work the system can do.

The next stage of AI literacy is not learning to stop saying please. It is learning when please is part of a useful conversational mode and when it is a substitute for control. The mature interface is not the one that most convincingly says sorry. It is the one that helps the user see what has to change next.

Cole, D. M. (2026, April 23). A machine that says sorry. Multiplicity. https://multiplicity.dev/papers/a-machine-that-says-sorry