A company called Frontera Health is hiring Board Certified Behavior Analysts (BCBAs) for a job called Clinical Prompt Engineer.1

The title is easy to pass over — hybrid job titles appear whenever a new technology reaches an old profession. But it is worth slowing down. Frontera raised $42 million in seed funding to build artificial intelligence (AI) for autism care.2 Its software records therapy sessions and analyzes video, audio, movement, speech, and interaction at thirty frames per second — beyond what a human observer can reliably see. Its clinical-report tools promise to cut hours from assessment writing. Its own clinics in rural New Mexico serve as the testing ground.3

The company is not led by behavior analysts. Its chief executive officer is a former partner at Kleiner Perkins, one of Silicon Valley's most powerful venture firms, whose son has autism.4 One co-founder and AI leader is an IIT-Delhi researcher who previously co-founded an AI autism-screening platform. The leadership is venture capital, product, engineering, and machine learning. Behavior analysts appear in the public story as users, validators, and now prompt engineers.

The role requires a current BCBA credential and years of clinical experience. The work is to develop and refine AI prompts so the software produces clinical output a behavior analyst would recognize. The field's expertise is genuinely needed. That is the interesting part. It is needed enough to be hired into the product. But it is being hired downstream — after the architecture, after the funding, after the product decisions have already been made. An ordinary hybrid title tells you where the new territory begins; this one tells you where the old territory ends.

This is not a story about one company. Frontera may build useful tools. Families in rural New Mexico may benefit. The sharper point is that the field whose science is built around behavioral measurement did not build the measurement stack first. Someone else noticed the problem, raised the money, hired the engineers, and then came back for the BCBAs.

That should bother us.

A lineage the field never claimed

Behavior analysis has an unusual relationship to artificial intelligence. It is not merely another health profession being asked to adopt AI. Some of the most important ideas in modern AI pass through territory behavior analysts should recognize immediately.

Reinforcement learning — the branch of machine learning concerned with how an agent learns from the consequences of its actions — did not appear from nowhere. Sutton and Barto's foundational textbook traces the lineage through Thorndike's Law of Effect and devotes a full chapter to connections with behavioral psychology.5 Reinforcement learning from human feedback (RLHF), used to align and improve large language models after pretraining, is routinely described by AI researchers as drawing on operant conditioning, animal behavior, and the Law of Effect.6 Harvard's Kempner Institute published a piece titled “From Lab Rats to Chatbots” stating that large language models learn “much like rats in a Skinner box.”7 The analogy oversimplifies — modern AI training involves much more than reinforcement — but the lineage is not disputed by the people who built these systems.

The people telling this story are computer scientists, computational neuroscientists, and science journalists. Behavior analysis has not made this lineage central to its own public account of AI. A field that helped give AI one of its central training logics is not substantially present in the room where those ideas became infrastructure.

That is the first strange fact. The second is that this absence would have been hard to predict at the beginning of the field.

When behavior analysts were builders

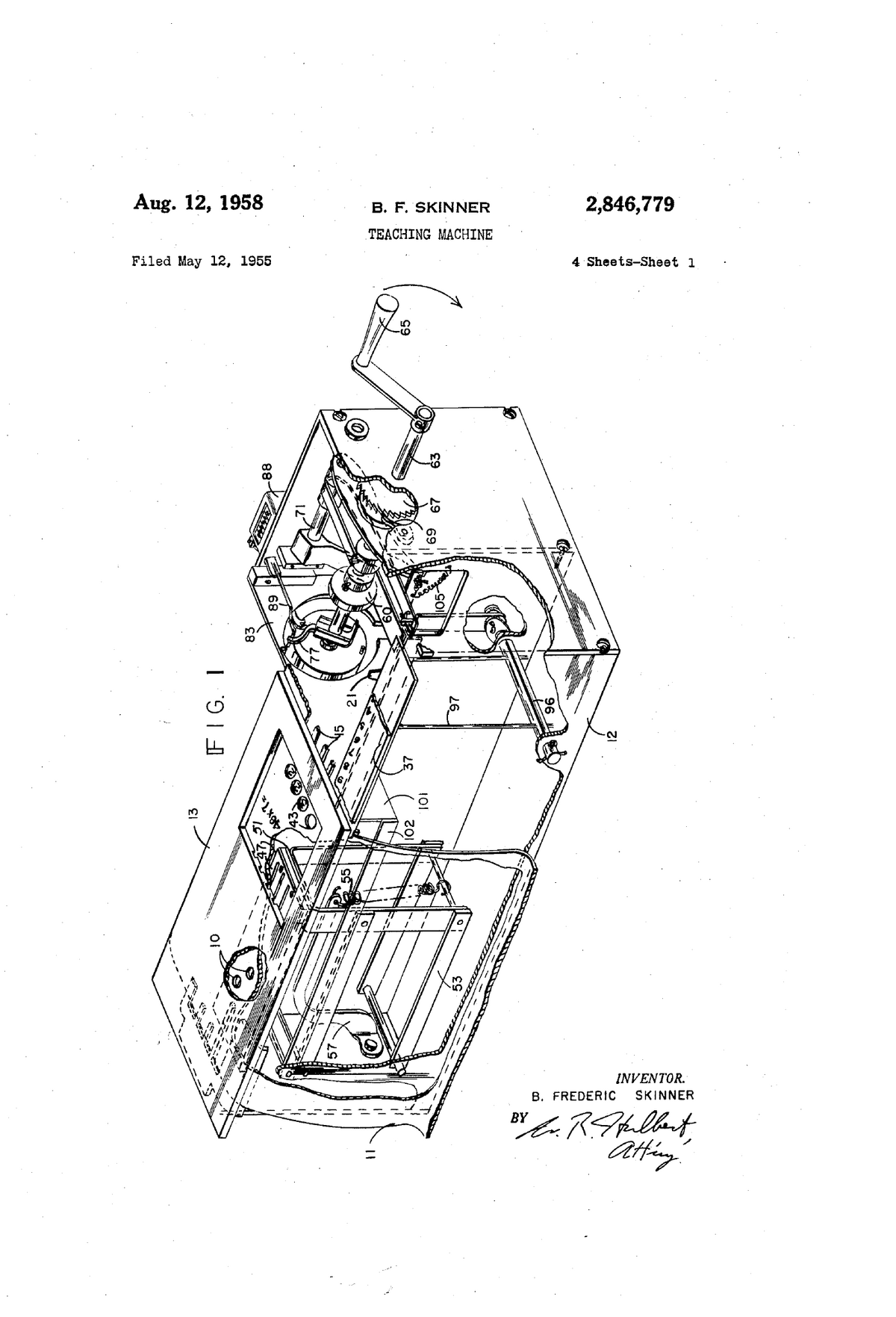

Skinner was not only a theorist. He was a builder.

The operant chamber was not borrowed from experimental psychology with a new label attached. It was engineered to solve a specific measurement problem: how do you observe behavior continuously, automatically, without relying on a human observer's memory, patience, or fatigue? In “A Case History in Scientific Method,” Skinner described the device as born partly from an engineer's impatience — “some ways of doing research are easier than others” — and partly from accidental discoveries that only continuous automated recording could have caught.8 An equipment malfunction that jammed a food magazine led to the observation of extinction curves. The science followed the instrument.

The cumulative recorder was not decoration. Skinner compared it to the microscope, X-ray camera, and telescope — instruments that made visible what ordinary observation could not.9 Before the recorder, behavior was counted in trials. After it, behavior was a slope: continuous, real-time, visually inspectable. That was not a stylistic choice. It was a measurement revolution.

In 1948, Skinner extended the engineering ambition from hardware to society. His novel Walden Two imagined a community designed on behavioral principles — positive reinforcement, designed environments, reduced aversive control.51

That ambition did not carry forward evenly into applied work. The move from laboratory chambers to classrooms, clinics, homes, and psychiatric wards made automatic recording harder. Applied behavior analysis gained social reach, but much of its measurement became manual again: observers with clipboards, interval sheets, counters, and later tablets. The data apps enhanced the clipboard. They did not recreate the automatic measurement ambition Skinner had already demonstrated.

Skinner was not an outlier. Building was normal in early behavior analysis. Ogden Lindsley built a human operant laboratory and framed free-operant measurement in engineering terms.10 Charles Ferster built automated programming equipment and ran tens of thousands of hours of automated behavioral research.11 Nathan Azrin — who used the language of behavioral engineering — built token-economy systems, toilet-training apparatus, habit-reversal protocols, and a community reinforcement approach that treated entire social environments as designable systems.12 Sidney Bijou engineered classroom arrangements.13 The founders treated instrumentation as continuous with science.

In 1968, Baer, Wolf, and Risley warned that applied work could degenerate into “a collection of tricks” if it lost principled connection to the science that generated its procedures.14 We are now living inside that warning.

Growth without instruments

In 1999, there were twenty-eight BCBAs.15 Today the Behavior Analyst Certification Board (BACB) credentialing system includes approximately 342,000 certificants across BCBAs, Board Certified Assistant Behavior Analysts (BCaBAs), and Registered Behavior Technicians (RBTs).16 In the United States, state-by-state autism insurance reforms — beginning with Indiana's mandate in 2001 and closing with Tennessee in 2019 — helped make ABA a reimbursed healthcare service nationwide,17 and that reimbursement system became the commercial engine of the modern ABA industry.

A profession can grow faster than its science. It can grow through billing codes, credential maintenance, utilization review, and compliance infrastructure while its measurement tools barely change.

Private equity entered the field heavily in the mid-2010s. More than sixty firms have been active in ABA acquisitions, and a large share of autism-related mergers and acquisitions between 2017 and 2022 involved private equity.18 The Center for Autism and Related Disorders became emblematic: acquired by Blackstone for roughly $600 million, it later collapsed into bankruptcy after operational strain, training reductions, and clinic closures, and was repurchased for a small fraction of that price.19 RBT turnover runs between 77% and 103% annually. BCBA burnout is reported at 72%.20 The causes are multifactorial and the numbers deserve careful reading, but the broad pattern is real: ABA is a labor-intensive service delivered inside systems that reward billable volume more clearly than scientific instrumentation. Claims data show ABA visit volumes grew by roughly 267% between 2019 and 2024, with the bulk of billing concentrated in a single technician-delivery code.21 The dominant commercial incentive is to move more hours through that code, not to measure what happens during those hours with greater fidelity.

This is the short-sighted part. The scientific methods that produced ABA's evidence base also produced the advocacy, the state-by-state mandates, and the reimbursement stream that followed. The industry has been harvesting that dividend for two decades. It has not been reinvesting it in the measurement science that earned the dividend in the first place. A field that grows through mandate and does not reinvest in the instruments of its science gets the worst of both worlds: market dependence on the science alongside declining capacity to renew it.

The measurement problem sits inside that system. Many clinical data collectors have competing responsibilities during sessions — teaching, prompting, reinforcing, redirecting — while simultaneously recording what happened.22 The measurement methods vary by program: trial-by-trial accuracy, frequency, duration, latency, momentary time sampling, partial-interval recording, whole-interval recording, narrative ABC data. The important claim is not that one method dominates. It is that much applied measurement is constrained by human bandwidth, and those constraints are treated as normal rather than as a problem to engineer away.
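The bandwidth constraint can be made concrete. The sketch below is illustrative only: it assumes a continuous record of when a behavior started and stopped (the hypothetical `bouts` list, invented for this example) and scores the same session three ways, by partial-interval recording, whole-interval recording, and momentary time sampling. The function names, the sixty-second session, and the ten-second interval are assumptions made for the example, not any vendor's or researcher's method.

```python
from typing import Dict, List, Tuple

Bout = Tuple[float, float]  # (start_s, end_s) of one episode of the behavior


def true_percent_duration(bouts: List[Bout], session_s: float) -> float:
    """Percent of the session the behavior actually occupied."""
    return 100.0 * sum(end - start for start, end in bouts) / session_s


def _overlap(bouts: List[Bout], lo: float, hi: float) -> float:
    """Seconds of behavior that fall inside the window [lo, hi)."""
    return sum(max(0.0, min(end, hi) - max(start, lo)) for start, end in bouts)


def _occurring_at(bouts: List[Bout], t: float) -> bool:
    """True if any bout is in progress at instant t."""
    return any(start <= t < end for start, end in bouts)


def interval_estimates(
    bouts: List[Bout], session_s: float, interval_s: float
) -> Dict[str, float]:
    """Percent of intervals scored under three common discontinuous methods."""
    n = int(session_s // interval_s)
    if n == 0:
        raise ValueError("session must contain at least one full interval")
    partial = whole = momentary = 0
    for i in range(n):
        lo, hi = i * interval_s, (i + 1) * interval_s
        covered = _overlap(bouts, lo, hi)
        if covered > 0:                    # behavior occurred at any point
            partial += 1
        if covered >= interval_s - 1e-9:   # behavior filled the whole interval
            whole += 1
        if _occurring_at(bouts, hi):       # behavior in progress at the check
            momentary += 1
    return {
        "partial": 100.0 * partial / n,
        "whole": 100.0 * whole / n,
        "momentary": 100.0 * momentary / n,
    }


# Invented data: four bouts in a 60-second session, scored in 10-second intervals.
bouts = [(3, 5), (12, 14), (28, 41), (55, 57)]
print(true_percent_duration(bouts, 60))
print(interval_estimates(bouts, 60, 10))
```

On these invented bouts the behavior occupies about 32% of the session. Partial-interval recording scores it in every interval (100%), whole-interval recording in one of six (about 17%), and momentary time sampling in two of six (about 33%). The direction of the partial-interval and whole-interval errors follows from the scoring rules themselves; their size depends on the interval length and on the data.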

The apps that replaced clipboards — CentralReach, Catalyst, Motivity, Raven, and others — improved many workflows and continue to add features.23 But the difference between digitizing clinical paperwork and rebuilding the measurement apparatus is the difference between a faster clipboard and a new instrument. The field has built the former, not the latter.

The instruments are being built elsewhere

Elsewhere, the instruments are being built — just not by behavior analysts.

The pattern closest to ABA's daily work is in automated behavioral coding. Animal-behavior researchers already have open-source tools (SLEAP, DeepLabCut, A-SOiD) for markerless pose tracking, multi-animal identification, and expert-guided behavior classification that are more sophisticated than what clinical ABA uses for human behavior.24 These systems do not just count behavior. They track movement at frame-level resolution, classify behavioral states in real time, and let researchers define the response classes that matter rather than accepting vendor defaults. The DeepLabCut project alone has grown into an open-source ecosystem of shared models, active extensions, training resources, and a community of contributors, one for which clinical ABA has no counterpart. The technology for continuous automated measurement of complex behavior exists. It is being used on zebrafish, mice, and primates. It is rarely being used on therapy sessions.

Ambient medical scribes are already reducing documentation burden in medicine. A Permanente Medical Group deployment reportedly saved physicians close to sixteen thousand hours of documentation time and improved patient-physician interaction,25 and peer-reviewed evaluations of ambient AI scribes have shown meaningful reductions in clinician burnout.26 Open-source speech and vision models can transcribe and tag clinical interactions. The infrastructure for turning a session into structured, searchable, analyzable data is no longer a research prototype. It is commodity technology waiting to be configured for behavior-analytic problems.

The screening and assessment layer tells the same story from the outside in. At Duke University, Geraldine Dawson and Guillermo Sapiro developed SenseToKnow, a tablet app using computer vision to quantify social attention, facial dynamics, head movements, blink rate, and response to name in toddlers. Published in Nature Medicine in 2023, it achieved strong autism-screening performance from a ten-minute assessment, and the technology has been licensed to Apple.27 Dawson is a clinical psychologist. Sapiro is an electrical engineer. No behavior analysts appear in the project. A nine-university consortium funded by a $20 million NSF grant — the AI Institute for Exceptional Education — is building AI tools for children with speech and language disorders, including autism.28 These are not ABA projects, and behavior analysts are not usually diagnosticians. But the broader pattern is clear: the measurement layer around autism and developmental disability is being rebuilt by engineers, psychologists, and AI labs, and it will not stop at screening. It will reach treatment delivery, outcome tracking, and service authorization — the places where ABA lives.

The venture-capital picture confirms the direction: global digital mental health attracted $2.7 billion in 2024, a 38% year-on-year increase, with autism-specific companies raising tens of millions each.29 Meanwhile, the technology layer inside the ABA market itself is still dominated by practice management — scheduling, billing, documentation — not measurement science.

Capital has noticed that behavioral measurement is a solvable problem. It has not waited for behavior analysts to solve it.

When the dial belongs to someone else

The quieter threat is not competition. It is loss of control over the instruments that define clinical reality.

Payers are deciding which measures count. In 2025, the National Academies concluded that TRICARE's ABA outcome measures — the Vineland-3, SRS-2, and PDDBI — were not validated for the purpose of measuring outcomes in the autism population to which they were applied, and recommended halting the requirement.30 These were payer-chosen assessments imposed on a behavior-analytic intervention.

The next generation of this problem is algorithmic. RethinkFirst markets a patent-pending AI dosage calculator trained on a large dataset to recommend ABA service hours to utilization reviewers.31 The provenance of such training data matters: historical authorization patterns already reflect payer preference, regional variation, and the biases of previous reviewers, which an algorithm trained on them will inherit and generalize. The decision layer — how much therapy, for how long, reviewed by whom — is being built around the field rather than by it. When the instrument that determines a child's therapy hours is designed outside behavior analysis, trained on data the field does not control, and operated by reviewers who are not behavior analysts, the field's expertise becomes advisory. It reviews. It appeals. It writes narratives. It does not set the terms.

And families will not protect the field from that shift. The parents who fought for ABA mandates fought for their children, not for a credential. Retention in ABA services stands at 46% at twenty-four months.32 Naturalistic developmental behavioral interventions are gaining evidence and adoption. Speech-language pathologists are delivering NDBI strategies under their own billing codes, bypassing ABA's insurance structure.33 If a parent sees a competing service that offers continuous progress data, clearer communication, and a therapist whose full attention is on their child, they will not ask which field has the stronger theoretical lineage. They will ask whether their child is helped. The pressure from the payer side and the pressure from the family side point in the same direction: a field that does not shape its own measurement instruments will find its authority squeezed between the two.

Topography is not function

A camera can detect that a child fell to the floor. A model can learn that floor-dropping happens more often during academic demands. A dashboard can count duration, latency, and co-occurring events. All useful. None sufficient.

Suppose the floor-drop produces escape from demands. The intervention is to modify the demand or teach an alternative response that achieves the same outcome. Now suppose the same floor-drop produces adult attention — the therapist turns, leans in, speaks. Same topography. Different controlling relation. Opposite intervention. A system that cannot distinguish what the behavior does in the environment cannot distinguish these two cases. It sees a child on the floor. It does not see why.

Behavior analysts work with black boxes. So, it is worth noting, do the AI engineers whose systems now dominate the technology conversation. Both traditions face the same practical problem: how do you steer a system whose internals you cannot inspect? The behavior-analytic answer has been to work at the level you can actually change — antecedent, response, consequence, and the contingencies that hold them together.

In 1959, Teodoro Ayllon and Jack Michael turned the psychiatric nurse into a behavioral engineer.34 On a locked ward where patients had been labeled unteachable, they redesigned the environment: prompts were changed, reinforcement was made contingent on behavior that had previously been ignored, tasks were broken into smaller steps that could be taught and reinforced in sequence. Patients began to learn. The point was not that these patients had no inner life. It was that the environment was where the leverage sat, and that a cohesive, repeatable method for arranging environmental contingencies was what the clinical team could actually do.

What changes now is not the level of analysis. It is the range of variables at that level. A room that can sense gaze, vocal affect, proximity, and response timing at frame resolution is a room where a far larger set of environmental variables becomes legible to a clinician. A room that can cue, prompt, or respond automatically is a room where the environment itself can be made to play its part. These are expansions of what an environmental approach can do — which is to say, expansions of what behavior analysis has been doing all along.

That same logic is the foundation of functional analysis, the method the field uses to decide what to do about a problem behavior. Its payoff is not philosophical; it is clinical. Treatments tied to a correctly identified function repeatedly outperform treatments that are not.35 The empirical base is substantial: the method's foundational report aggregated more than one hundred and fifty single-subject analyses, and reviews spanning four decades find reliably differentiated outcomes in the large majority of cases.36 That is not a methodological preference. It is an outcome difference.

What functional analysis does, and prediction alone cannot, is arrange the contingency on purpose and watch whether the behavior responds. Rich observational variability can narrow the candidate set: if a behavior sometimes precedes escape, sometimes attention, and sometimes tangible delivery, a good model may even propose a primary function. But identifying which of those arrangements would actually change the behavior, for this person, in this setting, requires changing one of them and observing the result. Judea Pearl put the formal version succinctly: association asks what correlates with what; intervention asks what happens when you change something.37 Most machine learning lives on the first rung of Pearl's ladder of causation. Functional analysis lives on the second.
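A small sketch shows what the first rung looks like in practice. It assumes a hypothetical list of antecedent-behavior-consequence records (the labels and counts are invented) and tallies how often each consequence followed a given behavior. The names `AbcRecord` and `consequence_proportions` are illustrative assumptions, not part of any existing tool.

```python
from collections import Counter
from typing import Dict, List, Tuple

AbcRecord = Tuple[str, str, str]  # (antecedent, behavior, consequence)


def consequence_proportions(
    records: List[AbcRecord], behavior: str
) -> Dict[str, float]:
    """Proportion of occurrences of `behavior` followed by each consequence.

    This is descriptive (associational) evidence only: it summarizes what
    tended to follow the behavior, not what maintains it.
    """
    following = Counter(c for _, b, c in records if b == behavior)
    total = sum(following.values())
    if total == 0:
        return {}
    return {c: n / total for c, n in following.most_common()}


# Invented observational data: ten floor-drops and what followed each one.
records: List[AbcRecord] = (
    [("academic demand", "floor-drop", "escape")] * 6
    + [("academic demand", "floor-drop", "attention")] * 3
    + [("item removed", "floor-drop", "tangible")] * 1
)
print(consequence_proportions(records, "floor-drop"))
```

On this invented data, escape followed six of ten floor-drops, attention three, and tangible delivery one. That tally can rank hypotheses. It cannot choose among them, because escape and attention may routinely arrive together in the natural environment. Separating them requires arranging each contingency on its own and watching what the behavior does.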

The field's comparative advantage, then, is not a better prediction algorithm, and it is not a monopoly on the idea of function — adjacent fields reason about function too. It is that behavior analysis has worked longer and more systematically at building the structures that identify controlling relations reliably: experimental conditions, single-subject designs, contingency analyses, treatment-fidelity checks, and the institutional habit of tying treatment to what the test showed. That advantage is only real if it shows up in the instruments. If the field does not carry those structures into the measurement systems that clinicians, payers, and families rely on, those systems will default to what they measure most easily, which is topography. The field's most important contribution will become invisible at the exact moment measurement becomes more powerful.

A Tuesday afternoon, rebuilt

What would it look like to build instead?

A Tuesday afternoon. A seven-year-old and his therapist are in a clinic room. The room has multiple cameras mounted to reduce blind spots, including during physical prompting. A small edge-computing device — roughly the size of a book — runs video and audio analysis locally. Pose estimation — software that tracks body position — is calibrated to clinically relevant response classes, such as falls, head movement, proximity, or self-injury. Speech analysis is tuned to the child's limited and idiosyncratic vocalizations. Both run on the device. Nothing leaves the room as raw video. The therapist wears a small earpiece. She is not looking at a tablet. She is not marking intervals on a data sheet. She is focused on the child.

The system tracks the therapist too — not as a supervisor watching for mistakes, but as a co-therapist executing the program the behavior analyst has already designed. It checks prompt timing, reinforcement latency, and prompt hierarchy against the plan. When the planned next trial is due, it cues her. When a maintenance target has gone too long without a probe, it flags it. When the child begins to show the precursors to escalation the analyst asked it to watch for, the earpiece delivers a quiet word. The behavior analyst sets the rules. The system keeps them.

After the session, the behavior analyst opens a dashboard. The session appears as a dense time series. Problem behavior increased when demand density rose after minute twelve. Reinforcement latency drifted from two seconds to five over the session — treatment-integrity data invisible to an observer collecting interval data on the child alone. Unprompted manding climbed in the final ten minutes, and the trend across the last eight sessions shows as a slope rather than a score. A pattern appears that no interval sheet could have revealed: problem behavior did not rise with demands in general; it rose when demands followed rapid therapist attention, and fell when the same demands followed a quieter transition. Another pattern, quieter but just as useful: the child's vocal approximations of a target request increased fourfold during the minutes when the environmental noise floor dropped below a threshold the analyst had flagged as relevant. Neither pattern was the one the program was primarily written to detect. Both are inspectable because the density of the data makes them inspectable. The behavior analyst reads the patterns through a functional lens, because she is the one who told the system what to surface and what to ignore.
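The latency drift in that dashboard is ordinary arithmetic once the timestamps exist. The following is a minimal sketch, assuming the system has already logged, for each trial, the minute it occurred and the seconds between the correct response and reinforcer delivery. The numbers are invented, and `planned_max_s` stands in for whatever tolerance the behavior analyst wrote into the plan. A plain least-squares slope is one simple way to summarize drift, not the only defensible one.

```python
from typing import Dict, Sequence


def least_squares_slope(x: Sequence[float], y: Sequence[float]) -> float:
    """Ordinary least-squares slope of y on x."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two or more paired observations")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx


def latency_report(
    trial_min: Sequence[float],
    latency_s: Sequence[float],
    planned_max_s: float,
) -> Dict[str, float]:
    """Summarize reinforcement-latency drift across one session."""
    return {
        "slope_s_per_min": least_squares_slope(trial_min, latency_s),
        "trials_over_plan": sum(1 for v in latency_s if v > planned_max_s),
        "n_trials": len(latency_s),
    }


# Invented session: latency creeps from 2 s to 5 s over thirty minutes.
report = latency_report(
    trial_min=[0, 10, 20, 30],
    latency_s=[2.0, 3.0, 4.0, 5.0],
    planned_max_s=3.0,
)
print(report)
```

With these invented values the slope is about 0.1 seconds per minute and two of the four trials exceed the planned maximum. What slope counts as drift worth acting on is a clinical decision, not a statistical one.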

None of this displaces what only a human can do in this room. Autism spectrum disorder is diagnostically defined by persistent deficits in social communication and interaction alongside restricted and repetitive patterns of behavior. In practice, some associated behavior patterns — elopement, self-injury, aggression — can pose concrete safety risks.38 A room full of sensors is still a room where the child's repertoire is being built, moment to moment, by another person. The sensors do not replace the therapist whose attention is on the child. The sensors are there so the therapist's attention can stay on the child — not on a data sheet.

The hardware is not exotic. Cameras, microphones, and edge devices are cheap enough for serious pilots: small, deliberately limited trials designed to learn whether a system is useful before a clinic scales it. The minimum hardware can cost under two thousand dollars.39 Louisiana has passed legislation requiring cameras in special education classrooms, effective February 2026. Texas allows them on parent request. The recording infrastructure is arriving whether the field builds on it or not.

What sets that infrastructure apart from a generic surveillance rig is the design work behind it. What counts as a response class, how antecedent events are tagged, whether the system tracks therapist behavior, how treatment integrity is operationalized, how generalization is measured, how the family accesses the data — these are behavior-analytic decisions, not procurement choices. If behavior analysts make them, they build demand for behavior-analytic expertise. If they do not, demand shifts to whoever owns the instrument.

And ownership matters. If every clinic rents this future from a proprietary vendor whose categories, defaults, and data access rules it did not define, behavior analysts become dependent on private systems they cannot inspect or modify. The field should aim at open protocols, shared data schemas, interoperable exports, and open-source reference implementations where possible. Companies can and should build products. But behavior analysts have to demand exportability, inspectability, and behavior-analytic categories before procurement locks in someone else's defaults. A science of behavior cannot be comfortable if the basic instruments of behavioral measurement exist only as vendor property.
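What a shared schema might look like is easier to argue about with something on the table. The sketch below is purely hypothetical: the `SessionEvent` fields, their names, and the JSON Lines export are assumptions made for illustration, not an existing standard or any vendor's format. The point is only that behavior-analytic categories (who acted, which response class, under which version of its operational definition, recorded by what source) can be written down in a form any system can read and any clinician can inspect.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass(frozen=True)
class SessionEvent:
    """One timed event in a session record (hypothetical schema)."""
    session_id: str
    onset_s: float            # seconds from session start
    offset_s: float           # seconds from session start
    actor: str                # e.g. "client" or "therapist"
    response_class: str       # label chosen by the behavior analyst
    definition_version: str   # which operational definition was in force
    source: str               # e.g. "sensor" or "human_observer"


def export_jsonl(events: List[SessionEvent]) -> str:
    """Serialize events as JSON Lines, one event per line."""
    return "\n".join(json.dumps(asdict(e), sort_keys=True) for e in events)


def import_jsonl(text: str) -> List[SessionEvent]:
    """Rebuild events from a JSON Lines export."""
    return [
        SessionEvent(**json.loads(line))
        for line in text.splitlines()
        if line.strip()
    ]


# Invented example: one client event and one therapist event.
events = [
    SessionEvent("s-001", 724.0, 731.5, "client", "floor-drop", "v2", "sensor"),
    SessionEvent("s-001", 733.0, 733.4, "therapist", "vocal prompt", "v1", "sensor"),
]
exported = export_jsonl(events)
assert import_jsonl(exported) == events  # the export round-trips without loss
print(exported)
```

A plain-text export that round-trips is a low bar, which is part of the argument. A clinic that can get its own data out in a documented shape can change vendors, share de-identified records, or audit a classifier. A clinic that cannot is renting its measurement.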

ABA serves many young, disabled, nonverbal, or dependent clients. The data are intimate. The power asymmetry is real. A sensor-rich therapy room raises privacy and governance questions that a field taking its work seriously will have to answer — and better to answer them at design time than at deployment.40

Two versions of the job

Imagine the behavior analyst's job five years from now.

In one version, the trend toward remote, software-mediated supervision continues.41 RBTs collect data in apps that remain conceptually close to clipboards. AI drafts reports, mostly to satisfy payers. A utilization-review algorithm recommends hours before the behavior analyst has made the clinical case. The profession survives for a while as a service layer inside systems built by others. Over time, the parts of the role that are easiest to specify — documentation, summaries, dosage recommendations, compliance checks — become the parts easiest to automate or move elsewhere.

In the other version, some behavior analysts become behavioral engineers. That title should not mean a clinician who knows how to ask ChatGPT for a task analysis. It means someone who understands behavior analysis deeply enough to know what must be measured, and understands modern tools well enough to make the measurement real. They know that a topography detector is not a functional analysis. They know why treatment integrity matters. They know when data density is meaningful and when it is noise dressed as precision. They can look at an AI-generated pattern and ask the behavior-analytic question: what environmental relation would make this useful?

That kind of clinician has to come from a different training pathway. Baer, Wolf, and Risley's warning applies directly here:14 techniques without generative principles can be repeated, but they cannot adapt when the problem changes, and they cannot produce new techniques when the tools do. A behavioral engineer is only as useful as the principles they can reason from. The field that wants more of these clinicians has to train people in the generative science — not a branded curriculum — deeply enough to build from it. That means conceptual systems alongside computational literacy: enough understanding of the principles to know what the measurement should look like, and enough fluency with modern tools to help build it. Neither half is optional. A clinician with deep principles and no tools designs measurement that never ships. A clinician with tools and no principles builds fast, confident instruments that measure the wrong things.

That is the clinician I would want when a family I cared about needed a behavior analyst. That person is hard to find in ABA right now.

The reason is partly the shape of mid-career professional development. Behavior analysis has a layer of brand-named method certifications — fidelity-checklist programs built around a single protocol or originator, with tiered levels and supervisor sign-offs — that clinicians pursue to advance.52 These certifications train clinicians to implement a specific protocol with high fidelity. Fidelity matters; it is also a technician's standard. A behavior analyst's standard is to experiment from the principles. A credentialing culture that treats permission-to-modify as a gift from the originator, rather than the ordinary work of the analyst, produces high-fidelity technicians rather than analysts.

Neither version arrives pure. The actual future will be a mixture — large platforms that embed behavior-analytic expertise as a service layer, boutique practices and university labs that build their own stacks, payer-owned decision tools that keep operating whether or not the field engages with them, a small open-source community that builds reference implementations used by a few. The question is not which version wins. It is where inside that mixture the field's authority will end up, and what share of the design decisions will have been made by people trained in the science.

The barriers are real. Current billing codes do not reimburse time spent building measurement infrastructure.42 The BACB task list includes no technology competencies.43 Healthcare AI has a poor deployment record: the Epic Sepsis Model, deployed across hundreds of hospitals, was later found to perform poorly in independent validation.44 Computer vision degrades with occlusion — common during therapy with young children.45 Education has its own cautionary failures.46

Those are not reasons to stop. They are reasons for ordinary clinical organizations — the typical multi-site provider, the single-clinic BCBA owner, the university training program — to start small. A clinic can test whether AI-assisted documentation reduces report time. A provider can test whether automated transcription improves supervision. A university clinic can test whether computer vision detects treatment-integrity drift. These are not moonshots. They are the kind of controlled comparison behavior analysts already know how to run.

The reason to start is not abstract. It is competitive. The provider that discovers a workflow where one behavior analyst with better measurement tools supervises more effectively than two behavior analysts with clipboards has a real advantage over the clinic next door. If a new role — say, a behavior analyst with technical fluency embedded in the supervision workflow — returns its cost through better authorization evidence, lower documentation burden, or earlier detection of treatment-integrity drift, that provider has a reason to invest more. If it does not return its cost, the provider stops. That is not failure. That is the selection process the field should understand.

Scale the logic up. If enough providers run enough small experiments, the ones that work get copied. The ones that fail get dropped. The field as a whole becomes more diverse — not just autism therapy, but behavioral measurement, system design, outcome architecture, treatment-integrity engineering. Some behavior analysts will sit closer to engineering teams and build the instruments. Some will sit closer to policy and define what the instruments are allowed to do. Some will keep doing high-fidelity clinical work with better tools than they have today. The monoculture breaks by distributing expertise across those roles, not by forcing every clinician down the same pathway. That diversification is not a luxury. For a field that has concentrated its professional identity in a single service line inside a single payer system, it is survival. A species that occupies one niche dies when the niche changes. A field that understands selection by consequences should not be afraid to let consequences select its next professional forms.

Diagnostic, not insult

There is a temptation to frame all of this as a threat story: AI will come for ABA. That is too crude.

The more interesting possibility is that AI will expose what behavior analysis has and what it has neglected.

It will expose that the field has a more serious account of environmental arrangement than most adjacent disciplines — that its best work has always been about finding leverage in the relation between person, action, and world. It will expose that functional analysis is not mere prediction — it is prediction tested through intervention. Asking what happens when the environment changes is a different and more useful question than asking only what correlates with what. It will expose that single-case logic is suited to individualized, streaming, intervention-sensitive data.

It will also expose that the field has allowed too much of its data to be privatized — enormous clinical data streams inside platforms, no public research commons, no shared dataset comparable to those that transformed other fields.47 The open-science gap is concrete: as of April 2026, JABA is listed by PubMed Central as no longer participating, with coverage ending in 2012, and NLM Catalog marks both JABA and JEAB as PMC Inactive.48 Adjacent disciplines moved toward open access. The field's flagship journals moved away from it.

The public agenda-setting gap is equally concrete. The Association for Behavior Analysis International (ABAI) holds one of the largest annual gatherings in the behavioral sciences. Between 2019 and 2025, its presidential and Presidential Scholar addresses covered climate change, domestic violence prevention, nonviolent social action, science communication, giant rats trained for landmine detection, cultural evolution, and translational research — all important topics, none about the technological transformation reshaping every adjacent field.49 In its July 2024 newsletter, the BACB illustrated generative AI use with an example of refining a task analysis for toothbrushing. Compare that with the American Psychological Association's Council of Representatives, which approved a formal AI policy by a reported 156-2 vote, or the American Speech-Language-Hearing Association, which maintains an Innovations in Technology convention topic area that explicitly includes AI, machine learning, and large language models.50 The disparity is not about blame. It is about priorities made visible.

These exposures are not insults. They are diagnostics. A field that knows what it has — and is honest about what it has neglected — can still choose to build.

Frontera's Clinical Prompt Engineer job posting is useful precisely because it is not an insult. It is ambivalent. It shows that behavior-analytic expertise has market value. It also shows where that expertise may be placed if the field does not build its own instruments: after the model, after the product, after the capital, after the architecture.

Behavior analysis was born when its founder built an apparatus because existing methods could not answer the questions he cared about. It matured through people who treated measurement, intervention, and engineering as continuous with science. Its defining paper warned against becoming a collection of tricks. Its core contribution is not a credential, a billing code, or a therapy brand. It is a way of treating behavior as lawful without pretending the inner machinery is transparent.

The field does not need to chase every AI trend. It does not need a chatbot for everything. It does not need to pretend that all technological change is progress. It needs to build the instruments that make its own science visible in the world now arriving.

If it does, the future behavior analyst may be more important than the current one: not less human-centered, but more capable; not replaced by measurement, but freed by it; not a prompt engineer in someone else's stack, but an architect of systems that know what behavior analysts mean by behavior.

If it does not, the tools will still be built. Cameras will enter classrooms and clinics. Payers will use algorithms. Parents will follow the services that seem to help. Engineers will discover that behavior is measurable. Companies will hire behavior analysts.

But they may need fewer of them, in narrower roles, with less authority over the systems that define care.